OpenAI Bites Back, Claude's Memory Lane, and Google Goes Wide

Hey friends 👋 Happy Sunday.

Here’s your weekly dose of AI and insight.

Every Wednesday, Signal Pro members get a step-by-step AI workflow they can apply immediately. No fluff, just practical guides to upskill you and your team. If you’re only reading the Sunday issue, you’re getting half the picture. Upgrade to paid today.

Today’s Signal is brought to you by MedOS.

Stanford-Princeton’s AI clinical co-pilot just went live in real hospitals. MedOS combines multi-agent AI reasoning, XR smart glasses, and collaborative robotics to work alongside clinicians in real time.

→ Now deployed at Stanford Blood Centre & Department of Pathology

→ Featured at NVIDIA GTC 2026

→ Near real-time XR response latency

MedOS has officially left the lab and entered real-world medicine.

Sponsor The Signal to reach 55,000+ professionals.

AI Highlights

My top-3 picks of AI news this week.

OpenAI

1. OpenAI Bites Back

OpenAI has released GPT-5.4, its most capable frontier model, unifying reasoning, coding, and agentic workflows into a single system built for professional work.

Knowledge work powerhouse: On GDPval, which tests AI performance across 44 occupations, GPT-5.4 matched or exceeded industry professionals 83% of the time, up from 70.9% with GPT-5.2. Spreadsheet modelling scores jumped from 68.4% to 87.3%.

Native computer use: GPT-5.4 is OpenAI’s first general-purpose model that can operate computers directly, achieving a 75% success rate on the OSWorld desktop navigation benchmark and surpassing the 72.4% human score.

Smarter tool handling: A new tool search feature lets the model pull in tool definitions only when needed, cutting token usage by 47% in multi-tool workflows. The model also supports up to 1M tokens of context, enabling agents to plan and execute across longer tasks.
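
To make the tool search idea concrete, here is a minimal Python sketch of loading tool definitions on demand. It is not OpenAI's API: the `TOOL_REGISTRY`, the `search_tools` helper, and the keyword matching are all hypothetical, and the character count is only a crude stand-in for token usage.

```python
import json

# Hypothetical registry: tool name -> full definition the model would need.
TOOL_REGISTRY = {
    "send_email": {
        "description": "Send an email to a recipient",
        "keywords": ["email", "send", "message"],
        "parameters": {"to": "string", "subject": "string", "body": "string"},
    },
    "create_event": {
        "description": "Create a calendar event",
        "keywords": ["calendar", "meeting", "schedule"],
        "parameters": {"title": "string", "start": "string", "end": "string"},
    },
    "read_sheet": {
        "description": "Read a range of cells from a spreadsheet",
        "keywords": ["spreadsheet", "sheet", "cells"],
        "parameters": {"sheet_id": "string", "cell_range": "string"},
    },
}


def search_tools(task: str) -> dict:
    """Return only the definitions whose keywords appear in the task text."""
    words = set(task.lower().split())
    return {
        name: spec
        for name, spec in TOOL_REGISTRY.items()
        if words.intersection(spec["keywords"])
    }


def prompt_size(tools: dict) -> int:
    """Crude proxy for context cost: characters in the serialised definitions."""
    return len(json.dumps(tools))


task = "schedule a meeting with Sam"
selected = search_tools(task)
print(sorted(selected))  # ['create_event']
print(prompt_size(selected), "vs", prompt_size(TOOL_REGISTRY))
```

The point of the sketch is the shape of the saving: only the matching definition goes into the prompt, so the context grows with the tools a task needs rather than with every tool installed.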

Alex’s take: I asked my audience how GPT-5.4 stacks up against Anthropic's Opus 4.6 with extended thinking. The consensus was that GPT-5.4 is a workhorse, not a thoroughbred. Power users say it's faster and more usable than previous GPT models, with genuinely improved writing and instruction-following. But for deep research and complex reasoning, Opus 4.6 still holds the edge. One individual described GPT-5.4 as what you reach for when you want to save your precious Opus tokens, not replace them. Real-world utility matters more than benchmark bragging rights.

Anthropic

2. Claude’s Memory Lane

Anthropic has launched a memory import feature for Claude, letting users transfer their entire personalisation history from ChatGPT, Gemini, or Grok in under 60 seconds.

How it works: Users copy a ready-made prompt from Claude’s settings, paste it into their existing AI provider, then feed the exported response back into Claude, transferring preferences, role context, projects, and communication style in four steps.

Switching costs eliminated: Claude hit #1 on the App Store this week while ChatGPT uninstalls surged 295%. The memory import feature directly targets the biggest barrier to switching: losing months of accumulated context with a rival provider. Anthropic also made memory free for all users, having previously restricted it to paid subscribers.

Voice mode comes to Claude Code: Separately, Anthropic began rolling out voice mode in Claude Code, its developer-focused coding tool. Currently live for ~5% of users, developers can type “/voice” and issue spoken commands to refactor code, debug, or dictate changes hands-free. OpenAI’s Codex shipped its own voice mode just one week earlier.

Alex’s take: Memory portability is a smart competitive move. Most people don't switch AI assistants because they've spent months training one to understand how they work. Anthropic just made that history transferable. The real play here is compounding utility, where the more Claude learns about you, the harder it becomes to leave. Anthropic lost the $200M Pentagon contract to OpenAI after refusing to allow its tech for mass surveillance and autonomous weapons. Instead of chasing government deals, they're now winning consumers. The App Store rankings and the ChatGPT uninstall wave suggest that bet is paying off. And Anthropic is fighting on every front simultaneously: consumer, developer, and enterprise.

3. Google Goes Wide

Google shipped three notable updates this week across developer tools, research, and its model lineup: a new Workspace CLI for AI agents, cinematic AI-generated videos from NotebookLM, and a cheap new model for developers building at scale.

Workspace goes agent-ready: Google open-sourced a new tool that lets AI agents plug directly into your Gmail, Calendar, Drive, Sheets, Docs, and Chat. Think of it as giving any AI assistant a set of keys to your entire Google Workspace. It ships with 100+ pre-built skills for common tasks like reading emails, scheduling meetings, organising files, and editing spreadsheets. One install and your AI tools can talk to all your Google apps natively, no middleware required.

NotebookLM goes cinematic: NotebookLM launched Cinematic Video Overviews, upgrading its video feature from basic narrated slideshows to fully animated, documentary-style explainer videos. The system combines Gemini 3, Nano Banana Pro, and Veo 3, with Gemini acting as creative director over narrative, pacing, and visual style. Currently exclusive to Google AI Ultra subscribers ($250/month) with a cap of 20 videos per day.

Gemini 3.1 Flash Lite: Google released its most cost-efficient model yet at just $0.25 per million input tokens and $1.50 per million output tokens. It delivers 2.5x faster time-to-first-token and 45% higher output speed than Gemini 2.5 Flash. A standout feature is adjustable “Thinking Levels” (Minimal to High), letting developers control reasoning depth per request to balance speed against accuracy.
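
For a feel of what those prices mean at scale, here is a quick back-of-the-envelope calculation in Python. The two prices come from the announcement above; the workload (10,000 requests of 2,000 input and 500 output tokens each) is a made-up example, and `estimate_cost` is a hypothetical helper, not part of any Google SDK.

```python
# Prices quoted above, in USD per million tokens.
INPUT_PRICE_PER_M = 0.25
OUTPUT_PRICE_PER_M = 1.50


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimate spend in USD for a given number of input and output tokens."""
    total = input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M
    return total / 1_000_000


# Hypothetical workload: 10,000 requests, each 2,000 tokens in and 500 out.
requests = 10_000
cost = estimate_cost(requests * 2_000, requests * 500)
print(f"${cost:.2f}")  # $12.50
```

Note that output tokens cost six times as much as input tokens here, so for chatty workloads the response length tends to drive the bill more than the prompt does.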

Alex’s take: The Workspace CLI is the one to watch. Any startup whose main value prop is "we connect to your Google stuff and do X" just got their moat erased by a single free download. When your productivity suite has a native agent interface with 100+ pre-built skills, the middleware layer that Zapier and Make have been charging for starts to look very thin. Google isn't winning the AI race on any single front. They're dominating on breadth right now.

Content I Enjoyed

Anthropic Measured AI’s Impact on Jobs

Anthropic published a research paper this week measuring AI’s real-world impact on the labour market, using its own usage data from Claude.

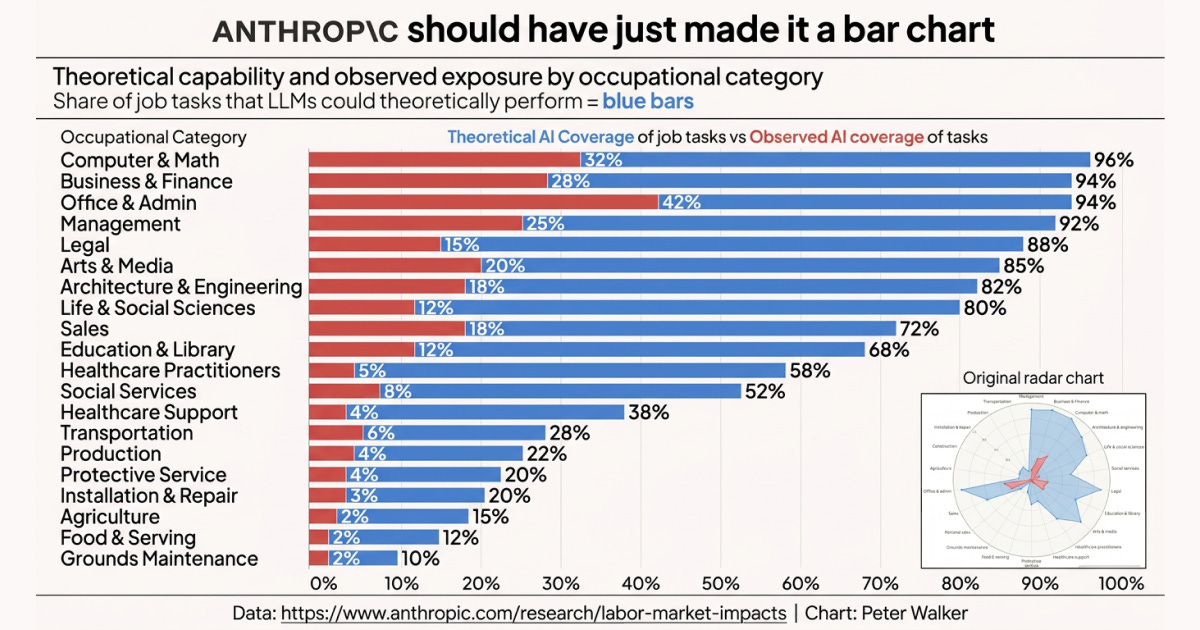

The report’s key visual was a radar chart comparing theoretical AI capability against observed real-world usage across occupational categories. However, I much preferred Peter Walker’s (Head of Insights at Carta) horizontal bar chart (pictured above). I think it’s far easier to actually extract trends from. The gap between what AI could automate and what it is automating becomes immediately obvious.

The “observed exposure” metric combines theoretical AI capability with real-world professional usage. In Computer & Math roles, LLMs could theoretically handle 94% of tasks, but Claude currently covers just 33%. The bottleneck here isn’t capability; we know how good models are at solving technical problems. Legal constraints, verification requirements, and slow enterprise rollout are what’s holding adoption back today.
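
The gap is easy to quantify from the two Computer & Math figures. The snippet below is just my own arithmetic on those numbers, not the paper's methodology, and `adoption_gap` is a hypothetical helper name.

```python
def adoption_gap(theoretical_pct: float, observed_pct: float) -> tuple[float, float]:
    """Return (gap in percentage points, share of theoretical capability in use)."""
    gap = theoretical_pct - observed_pct
    realised = observed_pct / theoretical_pct
    return gap, realised


# Computer & Math: 94% theoretically automatable, 33% observed.
gap, realised = adoption_gap(94, 33)
print(f"{gap:.0f} points unused, {realised:.0%} realised")  # 61 points unused, 35% realised
```

In other words, roughly a third of what is theoretically possible in that category is showing up in real usage so far.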

Under the “Most exposed occupations” table, computer programmers top the list with 75% task coverage, followed by customer service representatives and data entry keyers. Yet there’s a deep irony here that I think is worth unearthing. Programmers are both the most exposed occupation and the heaviest adopters of AI tools. They’re the ones actively building and using the technology that automates their own work.

The workers most at risk overall skew older, female, more educated, and higher-paid, earning 47% more on average than their unexposed counterparts. People with graduate degrees are nearly four times more represented in the most exposed group.

Despite all this exposure, there’s been no meaningful increase in unemployment for high-risk workers since ChatGPT launched. The disruption isn’t showing up as layoffs, but rather as a hiring freeze. Entry into exposed occupations among 22-25 year olds has dropped roughly 14%, with no equivalent decline for workers over 25.

At the other end of the spectrum, 30% of workers have zero AI exposure. These are cooks, bartenders, motorcycle mechanics, lifeguards. The roles AI can’t touch are almost entirely physical.

I think it’s important to have a living measure that tracks how the gap between AI’s theoretical capability and real-world adoption narrows over time. That gap is where the next wave of disruption lives.

Idea I Learned

One Page of Questions Worth $5.5 Billion

In January 2024, Daniel Gross, then CEO of Safe Superintelligence and now head of AI product at Meta, published a single-page document titled “AGI Trades.” No predictions, just 18 questions about what happens if AI progress doesn’t stop.

Two years have passed since this blog article went live. NVIDIA has tripled its revenue to $215.9 billion and added $3.2 trillion in market cap. Copper surged from $3.75 to $6.61 per pound (an all-time high). Energy stock Vistra returned 321%, making it the second-best-performing stock in the S&P 500 in 2024. GPT-4-equivalent inference costs collapsed 50x in three years. Indian IT firms shed 58,000 employees after adding 360,000 in the two years prior.

The person who may have read those questions most carefully was Leopold Aschenbrenner. OpenAI fired him at 22 for raising safety concerns. By 23, he’d launched a hedge fund. Today, at 25, he manages $5.52 billion in equity exposure across 29 positions, one of the fastest-growing funds Wall Street has ever seen.

His largest holding is an $876 million position in Bloom Energy, a fuel cell company that generates electricity on-site from natural gas. Gross asked in January 2024: “If it does become an energy game, what’s the trade?” Aschenbrenner clearly followed through.

Something worth noting: Aschenbrenner is married to the chief of staff at Anthropic, and his 13F filings are delayed by 1-3 months, so I wouldn’t recommend using this as a shopping list to copy.

But the broader lesson holds. The people who got rich from AI were the ones who understood watts, copper, and transformers with three-year lead times. This is the physical infrastructure that is critical for AI to run. And they understood this long before anyone was debating whether ChatGPT or Claude was the superior chatbot.

Quote to Share

I published this X post on Friday. Needless to say, it resonated with a few folks:

Over the last week, there’s been a new energy in the air. One that I would say isn’t immediately familiar this side of the pond, yet, when recognised, is immediately captivating.

Whilst the sun’s appearance on Thursday afternoon in central London (helping us enjoy some after-work drinks) may have been a contributing factor, I think it has been a type of energy that has been compounding over the last 18 months. Let me explain.

London is quietly becoming the most important AI city outside of San Francisco. And when I say quietly, I mean the opposite. It happened way faster than I thought. If you’ve been paying attention to the announcements in my X post, you’ll notice something. Every major AI lab on the planet is now hiring aggressively in London.

And the energy on the ground has caught up. Meetups are packed. Builders are swapping ideas in pubs. People are genuinely excited.

At the same time, however, I think it’s important we address the pushback and not get too starry-eyed. These are American companies. They’re setting up satellite offices, not moving their headquarters. This raises a very real question about whether London becomes a place, let alone the place, where frontier AI gets built, or just a talent extension of Silicon Valley.

Even so, we might be missing the bigger picture.

Silicon Valley didn’t start as Silicon Valley. It started as orchards and military contracts. Stanford’s dean of engineering, Fred Terman, convinced a few companies to set up shop nearby. Hewlett-Packard built test equipment in a garage. Fairchild Semiconductor got its first real revenue from defence contracts building chips for NASA. The ecosystem didn’t arrive fully formed. It compounded. One anchor attracted talent. That talent attracted capital. That capital attracted more talent. Repeat for 60 years.

London’s version of that story starts with one decision that doesn’t get enough credit.

When Google acquired DeepMind in 2014, Demis Hassabis turned down a bigger offer from Mark Zuckerberg. He chose Google partly because they agreed to let DeepMind stay in London. It’s tough to truly comprehend the amount of pressure that must have come with that decision. A $500M acquisition by the world’s most powerful tech company, and he insisted on keeping the lab where it was. No relocation to Mountain View, nor a slow migration of the best researchers to California. London.

That single decision built the first pillar. DeepMind became the anchor. It trained a generation of world-class AI researchers who lived, worked, and built their networks in London. And now, when OpenAI, Anthropic, xAI, and Microsoft are all competing for AI talent in the same city, they’re drawing from a pool that exists because Demis held the line.

Every ecosystem needs its Fairchild moment. I think DeepMind staying in London was ours.

The question now is what we do with the momentum.

Question to Ponder

“I feel like I’m falling behind with AI. There’s so much happening and I don’t know where to start. What should I do?”

I hear this all the time. And it’s totally understandable.

New tools are launching daily, and there are headlines about breakthroughs every single week. It feels like drinking from a firehose.

The mistake I see a lot of people make is trying to keep up with everything. You don’t need to keep up with everything. You just need to start with one move.

Open Claude. Tell it everything about your job. Your responsibilities, your weekly tasks, the problems that eat up your time. Give it the full picture. Then ask it how AI can actually help you do your job better.

That’s it. That’s step one.

You’ll be amazed at how quickly you go from “I’m overwhelmed” to “oh, I can actually use this.”

From there, carve out a small window each week to stay curious. Ask Claude what’s new in AI. What tools people are talking about. What’s changed since last week. Then go play around with whatever catches your eye.

This creates a rhythm. You learn what’s happening, then you learn how to apply it. News and application. That balance is everything.

The second mistake most people make is trying to learn AI by “hoarding resources”: reading articles, watching videos, and collecting bookmarks they’ll never revisit.

The fastest path forward is using AI to solve a real problem you already have. Start there, and the rest follows.

To be honest, this is exactly why I write this newsletter each week and structure it the way I do. Sunday gives you the news (what’s happening, what matters, what to pay attention to), and Wednesday gives you the workflows (how to actually apply it to your work).

You stay informed AND take action. That’s the game.

Already a subscriber? Get your whole team on board. Signal Pro group subscriptions give everyone access to weekly AI workflows and tutorials, practical upskilling that pays for itself. It’s the kind of thing L&D budgets were made for. Share this with your manager today.

💡 If you enjoyed this issue, share it with a friend.

See you next week,

Alex Banks

P.S. 3 years of AI progress.
