Microsoft’s Edge Over the Web, OpenAI Goes Back to School, and Google Goes Deep

Hey friends 👋 Happy Sunday.

Here’s your weekly dose of AI and insight.

Today’s Signal is brought to you by Opera Neon.

Neon doesn’t just chat—it autonomously executes. Kick off a complex prompt, close your laptop, and return to a finished report, game, or dataset hosted in the cloud. Welcome to agentic multitasking, only in Opera Neon.

AI Highlights

My top-3 picks of AI news this week.

1. Microsoft’s Edge Over the Web

Microsoft has launched Copilot Mode, an experimental AI-powered browsing experience in Edge that transforms how users interact with the web.

Multi-tab context awareness: Copilot can see all your open tabs with permission, enabling better comparisons and faster decisions without constant tab switching.

Natural voice navigation: Users can speak directly to Copilot to locate information, open comparison tabs, or navigate pages with fewer clicks and keystrokes.

Anticipatory assistance: The browser anticipates user needs, organising browsing into topic-based "journeys" with next-step suggestions.

Alex’s take: We’re seeing browsers fundamentally transition from search engines → answer engines → action engines. Gone are the days of having to trawl through pages of search results. Commands are the future. They are the most direct route to the outcomes we wanted in the first place, such as booking a hotel or ordering food. I’m interested in watching Microsoft’s bet develop as browsers become collaborative (and proactive) assistants.

2. OpenAI Goes Back to School

OpenAI has launched “study mode” in ChatGPT, a learning experience designed to guide students through problems step-by-step rather than simply providing quick answers.

Interactive learning: Uses Socratic questioning, hints, and self-reflection prompts to encourage active participation and critical thinking instead of giving direct solutions.

Scaffolded responses: Information is organised into digestible sections with appropriate context, reducing cognitive overload for complex topics.

Personalised support: Tailors lessons to individual skill levels based on assessment questions and previous conversation history.

Knowledge checks: Includes quizzes and open-ended questions with personalised feedback to track progress and support retention.

Alex’s take: The introduction of this mode reminded me of my own struggles in school. I had to retake a year because the classroom moved too fast for me. What’s more, I was reluctant to ask questions for fear of “looking silly” in front of my peers. A “study mode” built into LLMs offers exactly what I needed back then: a patient personal tutor that’s available 24/7 and adapts to your individual learning style. You can ask as many questions as your heart desires without fear of reproach.

3. Google Goes Deep

Google has launched Deep Think in the Gemini app for Google AI Ultra subscribers, bringing advanced reasoning capabilities through their latest breakthrough in AI thinking.

Parallel thinking techniques: Deep Think generates multiple ideas simultaneously and refines them over time, mimicking how humans tackle complex problems by exploring different angles.

IMO gold-medal performance: Built on the same model that achieved gold-medal standard at the International Mathematical Olympiad, though the consumer version is optimised for speed and daily usability.

Extended reasoning time: By increasing "thinking time," the model can explore different hypotheses and arrive at more creative solutions to complex problems.

Alex’s take: £234.99/month for Google’s most advanced reasoning model is a steep price to pay. But it’s clear that intelligence commands a premium. Among models evaluated without tool use, it achieves state-of-the-art performance on “LiveCodeBench V6”, which evaluates competitive coding performance, and “Humanity’s Last Exam”, a challenging benchmark that measures a model’s expertise in different domains, including science and math. I’m excited to see how this parallel thinking approach helps advance research and work in fields that require expert creativity and strategic decision making.

Content I Enjoyed

A Message From Mark

Mark Zuckerberg released a manifesto and an accompanying video this week outlining Meta’s vision for “personal superintelligence”.

Something that stood out to me was his pointed critique of competitors who believe “superintelligence should be directed centrally towards automating all valuable work, and then humanity will live on a dole of its output.”

This is clearly a shot at OpenAI's Sam Altman, who has openly discussed AI replacing jobs and leading to universal basic income.

Instead, Zuckerberg envisions AI heading down the Steve Jobs route of a “bicycle for the mind”: personal empowerment tools that help you “achieve your goals, create what you want to see in the world, experience any adventure, be a better friend to those you care about, and grow to become the person you aspire to be.”

Four years ago, Meta announced the metaverse and even renamed the whole company after it, going all in on the “vision”. Maybe we should expect an imminent name change, or maybe this time it’s different.

It’s clear Mark’s conviction is unwavering: a $14.3B investment for a 49% stake in Scale AI, plus $100M+ pay packages to poach top engineering talent from competitors. He even recently dangled a $1.5B pay package (across 6 years) in front of Andrew Tulloch, co-founder and leading researcher at “Thinking Machines”, the startup of Mira Murati (ex-OpenAI CTO).

Andrew declined. An incredible flex, and an incredible signal of just how scarce true systems architecture expertise has become in the current AI race.

Given that Andrew worked at Meta before as an engineer, maybe he knows something we don’t: that the $1.5B is delusional, and that working under Zuck lacks the meaning found at other frontier companies.

As we watch superintelligence play out, will it be a centralised utility that replaces human work, or a personal tool that amplifies human potential? As Zuck notes, "the rest of this decade seems likely to be the decisive period" for determining this path.

Idea I Learned

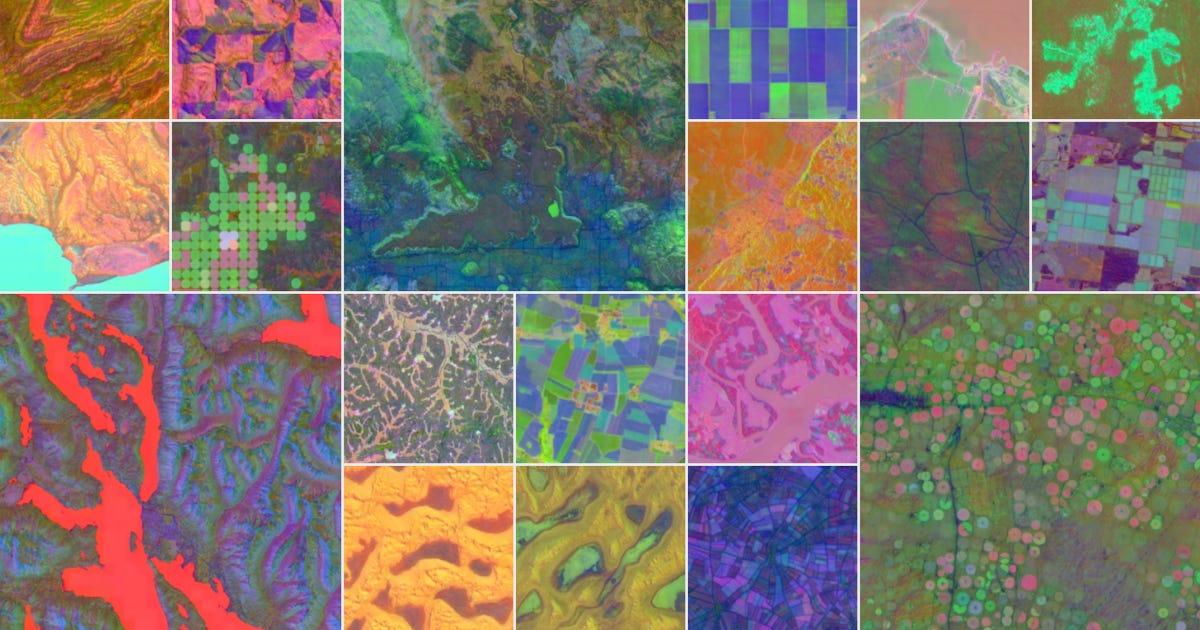

AI Can Now Map Our Planet in Unprecedented Detail

I’ve been fascinated with maps since I was a kid.

So when I saw Google DeepMind’s release of AlphaEarth this week, my interest was immediately piqued.

It’s an AI model that works like a "virtual satellite" to map our planet in unprecedented detail.

It combines petabytes of Earth observation data from dozens of sources (optical satellite images, radar, 3D laser mapping, and climate simulations) into a unified digital representation.

The breakthrough is in how it processes this information. The AI creates compact summaries for every 10x10 meter square of Earth's land and coastal waters, requiring 16 times less storage space than other AI mapping systems.

It can even see through persistent cloud cover and map areas that are notoriously difficult to image, like Antarctica. What’s more, it has the ability to reveal agricultural plots in various stages of development and detect land use variations invisible to the naked eye.

Over 50 organisations are using this technology to classify unmapped ecosystems, track deforestation, and monitor agricultural changes. In Google DeepMind’s evaluations, it had a 24% lower error rate on average than the other models tested.

This feels like a glimpse into the future of environmental monitoring: a continuous, detailed view of how the planet is changing in real time.

As a slight aside, this breakthrough reminded me of this X post. Instead of fearmongering or throwing around huge sums of money like its competitors, Google does fun stuff, like fine-tuning LLMs on dolphin sound data or building an AI model to simulate how a fruit fly works, and I love them for it. This is probably why, out of all the frontier company CEOs, Demis Hassabis is the only one with a Nobel Prize.

Quote to Share

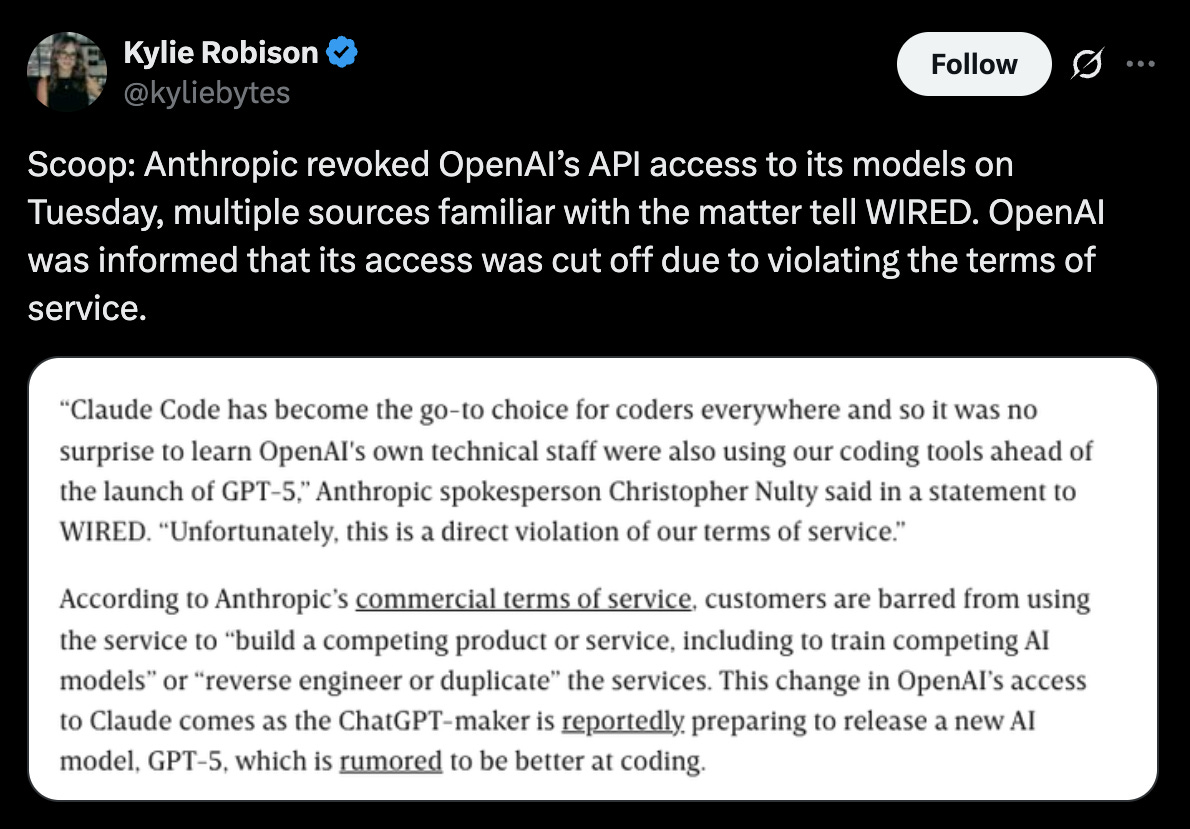

Kylie Robison on Anthropic revoking OpenAI's API access:

This week, Anthropic cut off OpenAI’s API access to Claude models after discovering OpenAI’s technical staff was heavily using Claude Code ahead of launching their upcoming GPT-5 model.

This is a clear violation of Anthropic’s terms of service, which prohibit using their models to build competing products, but OpenAI’s response is important to highlight:

“It’s disappointing considering our API remains available to them.” The relationship feels rather asymmetric; perhaps OpenAI will reconsider its own API policies after all.

A move like this isn’t new for Anthropic. Just last month, it restricted AI coding startup Windsurf’s access to its models after rumours circulated that OpenAI was considering acquiring the startup.

Looking past the squabble, my interest lies in how this will shape the future. Frontier models will be relentlessly competitive as the cost of intelligence trends lower, margins shrink, and differentiation becomes harder.

At the same time, the AI orchestration layer becomes neutral. Startups on the application layer get their pick of the best and can choose whichever model is most performant.

LLM providers will turn into utility companies. Startups can aggregate the best capabilities from each frontier model while the giants fight each other.

It’s clear that playing friendly isn't the answer when racing to AGI. This is war.

Source: Kylie Robison on X

Question to Ponder

“Given the massive commoditisation of knowledge and creative work, the question for (a lot) of us is how we remain valuable now that core activities become instantly available 24/7?”

I believe humans are, and will remain, critical for orchestration. Right now, AI doesn’t have all the context, and we have to tie these systems together and connect them in ways that create meaningful workflows.

There’s a huge opportunity to create enormous value in this domain right now, both as an individual and, more so, as an individual acting on behalf of an organisation. Think tool stacking, layering, and combining, where the sum is greater than the parts.

As we continue to ride the exponential of this S-curve, I think we’ll see new jobs emerge (in the medium term) before the underlying economics have to shift in the long term (towards something like UBI) as the cost of intelligence plummets to near zero.

Got a question about AI?

Reply to this email and I’ll pick one to answer next week 👍

💡 If you enjoyed this issue, share it with a friend.

See you next week,

Alex Banks
